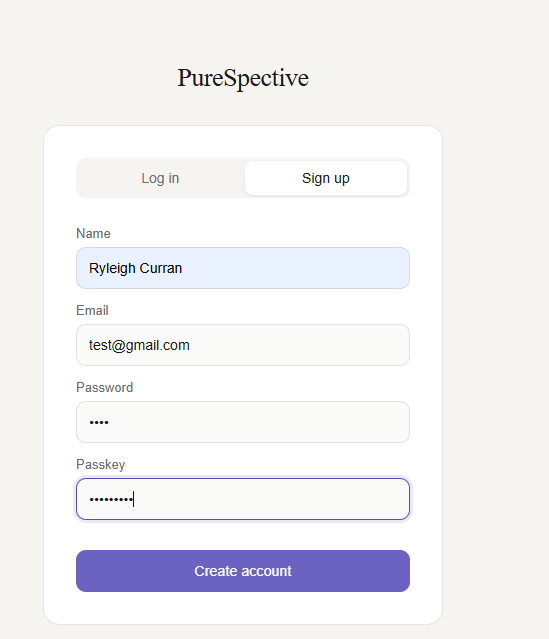

Sign Up Page

This is the user registration interface.

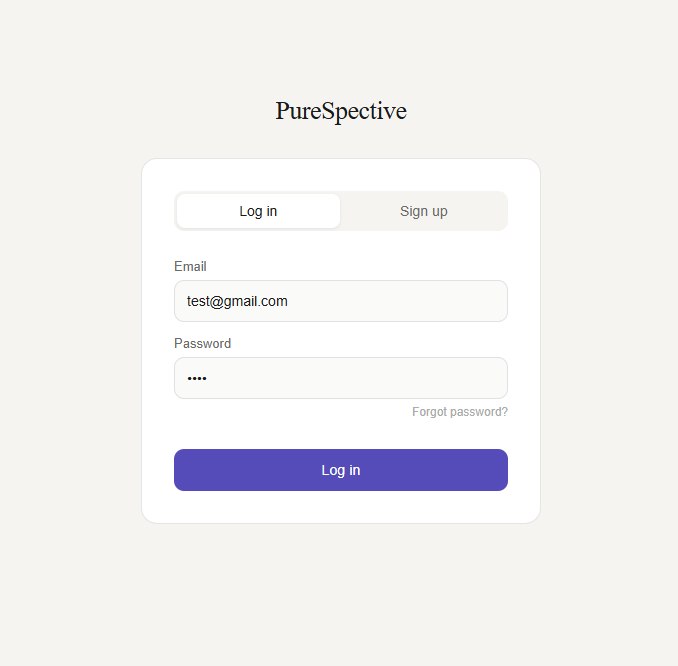

Login Page

Users can securely log in to their accounts.

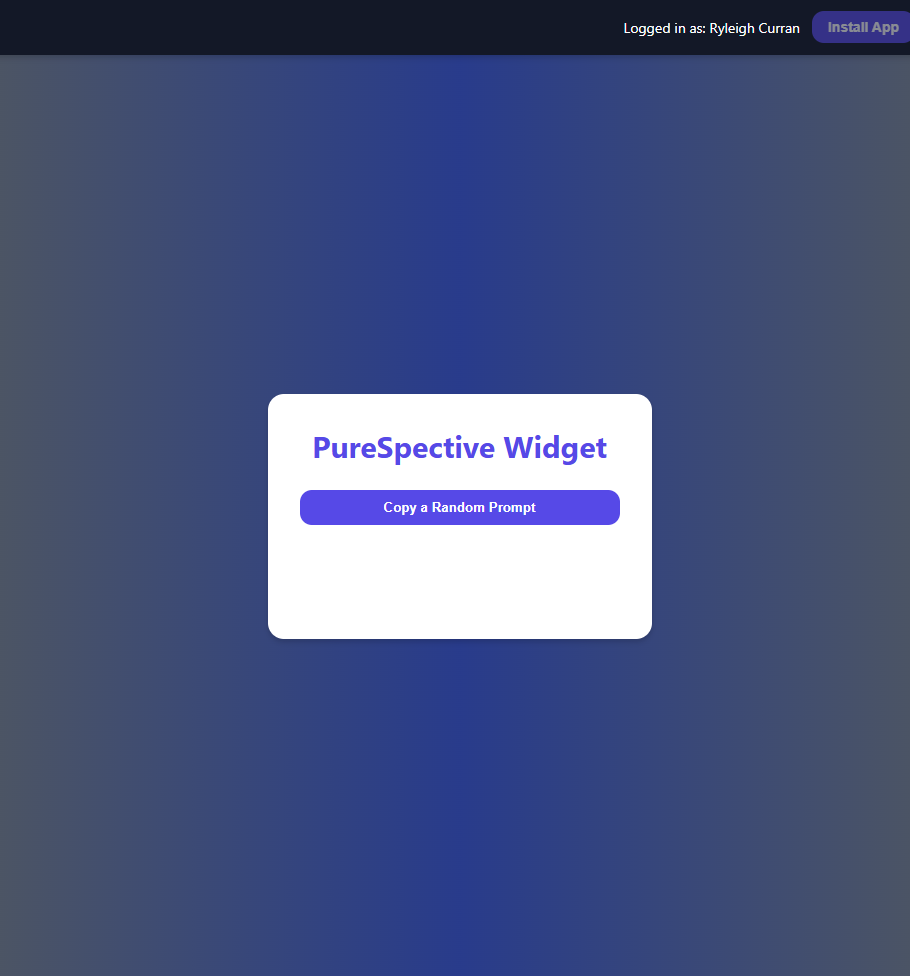

Successful Sign Up Notification

This notification confirms that the user has successfully created an account.

Random Prompt Feature

This screen shows the button users click to copy a random prompt.